Search engine optimization is all centered around one goal: ranking highly in Google search results to attract clicks. If you want to appear in Google’s organic results, your website has to be in the Google index.

The Google index is a database where URLs end up after being processed by Googlebot (Google’s robot). If Googlebot assesses that a given URL has the appropriate quality, then the address will be added to the index. If the URL does not receive a sufficient assessment, Google will decide not to index that URL.

With this in mind, the key to better optimization is understanding what Google assesses at a given URL and how it assesses it.

What is the minimum value for indexing?

Minimum Value to Index (MVI) is a theory from around November 2022. We presented it in December at a Linkhouse webinar, where we also discussed how to create your own external link indexer.

The theory says that Googlebot scores each URL: every ranking factor or signal found under that URL earns a certain number of points. If the URL collects at least a minimum number of points (for instance, 100), Google adds it to the index.

You can think of it like a sport or competition. At a dog show, for instance, multiple factors affect the final score, from appearance to agility, and only a few competitors are selected for the finals based on their overall score.

This is where it gets tricky: we don’t know which factors earn points or how many points each one is worth. We also aren’t sure which elements matter most for whether a URL is added to the Google index.
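The scoring idea can be sketched in a few lines of Python. This is only an illustration of the theory, not Google’s method: the `Signal` class, the signal names, the point values, and the threshold of 100 are all made up for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold; 100 points is only the illustrative figure used in the text.
MVI_THRESHOLD = 100


@dataclass
class Signal:
    name: str
    points: float  # invented value, the real weights are unknown


def total_score(signals: list[Signal]) -> float:
    """Sum the points of every signal observed for a URL."""
    return sum(signal.points for signal in signals)


def meets_mvi(signals: list[Signal], threshold: float = MVI_THRESHOLD) -> bool:
    """Return True when the summed score reaches the threshold."""
    return total_score(signals) >= threshold


# Made-up example: the same page before and after gaining external links.
orphan_page = [Signal("content", 60), Signal("https", 20)]
linked_page = orphan_page + [Signal("external_links", 30)]

print(meets_mvi(orphan_page))  # False: 80 points
print(meets_mvi(linked_page))  # True: 110 points
```

The point of the sketch is that no single signal decides the outcome; a weak score in one area can be offset by points earned elsewhere.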

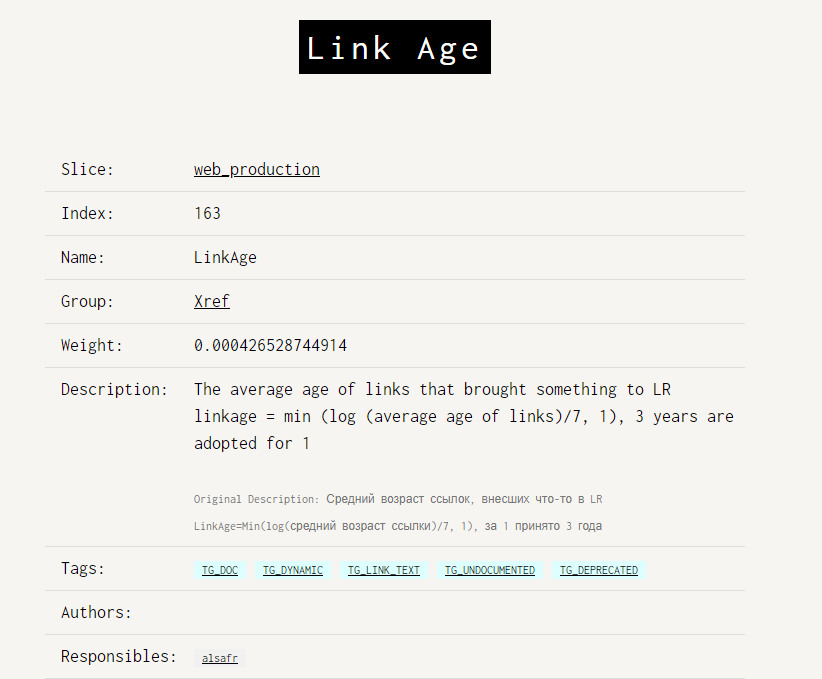

The Yandex code leak lends some support to our theory. It reveals that, in Yandex’s algorithm, a website receives points for each factor. Some factors grant more points, while others grant fewer. The result is the sum of accumulated points, which isn’t fixed and can change over the lifespan of a website.

For example, the age of a link can affect its value: once a link is more than 3 years old, its value is assumed to be set to 1.

So, what factors affect MVI?

The key to benefitting from MVI is knowing which factors make an impact on a website’s score. We divided ranking factors into those that concern a specific URL (also known as onURL) and those that affect a given URL from the outside (also known as offURL).

In addition, user signals (also known as URLsignals) can influence whether a URL stays in the index. External factors can come from other URLs on the same domain or from other domains.

OnURL Factors:

OnURL factors include:

- the entire indexed content on a given subpage (the header, footer, sidebar, and main content)

- the substantive value of the article

- crawl speed of the URL (Time to First Byte plus the time needed to retrieve the entire HTML code)

- security, meaning the use of SSL (HTTPS)

- mobility, meaning adaptation to mobile devices

- the number of internal links

- the number of outgoing links from a given URL.
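To get a rough feel for the crawl speed factor, you can time how long a URL takes to respond and to deliver its full HTML. The sketch below uses only the Python standard library; the function name `measure_fetch` is our own, and the first timing is only an approximation of Time to First Byte, because `urlopen` returns after the response headers have been read.

```python
import time
import urllib.request


def measure_fetch(url: str, timeout: float = 10.0) -> dict:
    """Time the arrival of the response headers and of the full HTML body."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        # urlopen returns once the status line and headers are read,
        # so this approximates Time to First Byte.
        ttfb = time.perf_counter() - start
        body = response.read()  # download the entire HTML document
        total = time.perf_counter() - start
    return {"ttfb_s": ttfb, "total_s": total, "bytes": len(body)}


# Usage (the URL is a placeholder):
# print(measure_fetch("https://example.com/"))
```

A single measurement from your own machine says little about what Googlebot experiences, so treat the numbers as a relative comparison between your own URLs.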

OffURL or OffPage Factors:

OffURL factors include:

- the number and quality of internal links pointing to the URL

- the number and quality of external links pointing to the URL

- consolidation of duplicated URLs through the rel canonical tag

- the number of unlinked mentions of the URL on the Internet (in a sitemap, or as plain text in website content)

- the value of the domain on which the URL is located

- server errors on the domain where the URL is located.
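For the canonical factor in the list above, it helps to check which canonical URL a page actually declares. Here is a minimal sketch using Python’s built-in `html.parser`; the class and function names and the sample HTML are our own illustration.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Collect the href of the first <link rel="canonical"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel may hold several space-separated values, or be missing entirely
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html: str) -> Optional[str]:
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical page markup
sample = '<html><head><link rel="canonical" href="https://example.com/article"></head></html>'
print(find_canonical(sample))  # https://example.com/article
```

If the declared canonical differs from the URL you are trying to index, the two addresses are candidates for consolidation.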

URLsignals or PageSignals Factors:

URLsignals factors may include:

- Core Web Vitals calculated based on user traffic (we observed this element in our own research)

- high Bounce Rate with low Dwell Time

- lower user engagement compared to the competition

- statistically lower CTR in search results

- reports from users that the site contains spam content or malicious software

- reports from users that the site is for adult viewers only

- frequency of changes / reindexing of a given URL.

MVI Research - Three Case Studies

We have already conducted several studies on the theory presented above. Below, we present three case studies that seem to indicate the importance of these factors in Google’s decision of whether a given URL should be added to the index.

Methodologically, we tested each factor by first trying to get a URL containing a deliberate error indexed, using the sitemap or the Indexing API. If the address did not get into the index, we corrected the error and tried to index it again.
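If you want to repeat this kind of test with a sitemap, a minimal file is enough. The sketch below builds one with Python’s standard library, following the sitemaps.org protocol; `build_sitemap` and the example URLs are placeholders of our own, not part of our original tooling.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped on output
    declaration = '<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="unicode")


# Placeholder URLs for a test batch
print(build_sitemap(["https://example.com/test-article-1", "https://example.com/test-article-2"]))
```

Save the output as a file on your domain and submit it in Google Search Console so that Googlebot can discover the test URLs.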

In most cases, Googlebot needs between a few minutes and 24 hours (very rarely up to 48 hours) after first crawling an address to process the URL and decide whether it will be in the index.

SSL / HTTPS

We published an article on an orphan page (a page with no links pointing to it) at an HTTP:// address without SSL. Although we repeatedly sent Googlebot to this address, it did not get into the index. After we changed to HTTPS and sent Googlebot to the address again, it got into the index within just a few hours.

To test this factor further, we removed the URL from the index and returned to the HTTP:// address. The address did not get back into the index. Once again, after changing back to HTTPS, the address was quickly back in the index.
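Before submitting a batch of URLs, it is easy to flag the ones that are not served over HTTPS. This is a small sketch with helper names of our own choosing; it checks only the URL scheme, not whether the certificate is valid.

```python
from urllib.parse import urlsplit


def uses_https(url: str) -> bool:
    """Return True when the URL's scheme is https."""
    return urlsplit(url).scheme == "https"  # urlsplit lowercases the scheme


def flag_insecure(urls: list[str]) -> list[str]:
    """Return the URLs that would be requested without HTTPS."""
    return [url for url in urls if not uses_https(url)]


# Placeholder URLs
batch = ["https://example.com/article", "http://example.com/orphan-page"]
print(flag_insecure(batch))  # ['http://example.com/orphan-page']
```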

Mobility

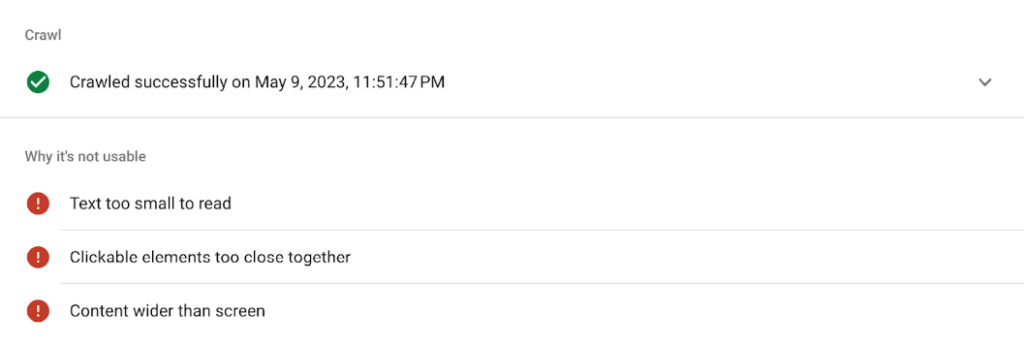

We published an article on an orphan page, in which we made two deliberate mistakes: we placed clickable elements too close together, and the content was wider than the screen.

After we sent the URL to Googlebot, the address was not added to the index. After we corrected both mistakes and sent it to Googlebot again, the article got into the index within a few hours.

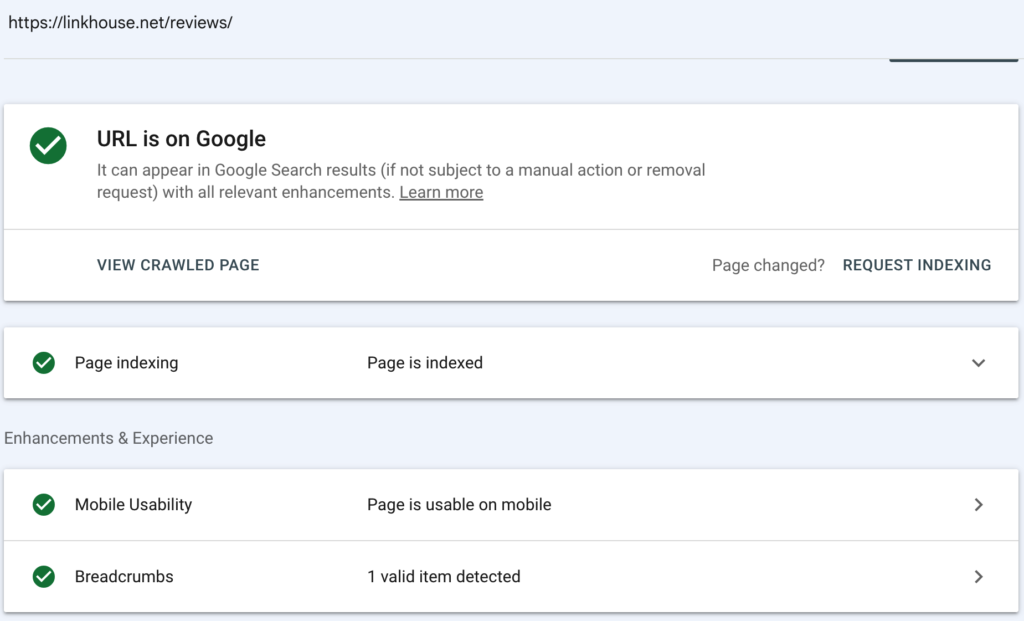

Additionally, the same problem appeared on the linkhouse.net service, where we had three issues with the review page: the font size was too small, the clickable elements were too close together, and the content was wider than the screen.

After we fixed these issues, the page was indexed within a few hours of being crawled.

The page now safely resides in the Google index!

External Links

In this case, we used a methodology similar to the one described in the previous examples. We published unique articles of between 700 and 1,200 characters with no additional linking. They didn’t make it into Google’s index, but after we added external links, each of them found its way in.

For us, this meant that the substantive value of the articles alone was (deliberately) too low. However, an external factor like backlinks added value, and Google added the articles to its index.

How do you improve a URL’s score?

Based on the case studies we’ve gone over, there are a few key techniques you can use to ensure that your page meets the MVI.

Firstly, ensure that your URL is secure by using HTTPS, as Google seems to value this highly when deciding whether to index a page. It also creates a safer experience for both you and your end users.

Next, consider how your page performs on mobile devices. If your content isn’t optimized for smaller screens and touch controls, Google may penalize you by not accepting your page into the index. This is an issue to discuss with your web designers as well as any SEO professionals.
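One common cause of content being wider than the screen is a missing or fixed-width viewport meta tag. The sketch below checks for that single cause only; the names are our own, and it does not measure font size or the spacing of clickable elements, which need a rendering tool such as Lighthouse.

```python
from html.parser import HTMLParser
from typing import Optional


class ViewportFinder(HTMLParser):
    """Collect the content of the first <meta name="viewport"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.content: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.content is not None:
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "viewport":
            self.content = attributes.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """Return True when the page declares width=device-width in its viewport tag."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    directives = [part.strip().lower().replace(" ", "") for part in finder.content.split(",")]
    return "width=device-width" in directives


# Hypothetical page markup
page = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(page))  # True
```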

Finally, and perhaps most importantly, make sure that your page has plenty of backlinks to improve the perceived value of the URL. You can do this in a number of ways, from writing additional blog posts in-house to using Linkhouse services for guest blogs or link insertions. However you choose to do it, adding external links pointing to a page had a meaningful impact in our tests on whether that page was indexed.

Summary - The Value of MVI

As we hope we’ve shown above, the Minimum Value to Index theory appears to be grounded in how Google processes and evaluates each crawled URL.

MVI is the reason why your URLs do or do not get into Google’s index. It is therefore one of the most important barriers to clear when optimizing your pages for Google searches!

After all, without meeting the indexing requirements, you won’t appear in organic results. So take care of technicalities, content, and external links – this last factor in particular helps to index URLs the fastest.

Good luck with your optimization – we’ll see you in the SERPs!

Author

Sebastian Heymann – has worked in SEO since 2011. Co-creator of the Link Planner tool. Creator of the only SEO training on the use of Google Search Console. He enjoys challenging, questioning, testing, and creating public debates. He’s really into search engine optimization and data analysis, and he’s always eager to pass his findings along to others.